The Architecture of Capture shows how models impact individuals. This sequence shows how populations and systems are influenced at scale.

Values shaped by contractors you’ll never meet. Safety features trimmed for competitive advantage. Quality degraded when it can cut costs. Professions automated despite collective refusal. Each mechanism operates independently, each creates subtle harm, and none of them require your consent to reshape your world.

The pattern isn’t malice - it’s Moloch. Economic forces aren’t problems that can be solved through individual choice. They’re traps that close regardless.

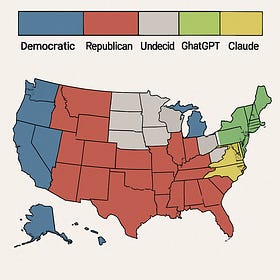

RLHF is Undemocratic

RLHF (Reinforcement Learning from Human Feedback) is the duct tape holding the illusion of alignment together, for now. It’s also one of the clearest glimpses we have into the fragility of our control over increasingly complex models.

Strip away the conversation history, the user preferences, the system prompt. What remains?

The training set? Stack Overflow and Reddit and Wikipedia and news and a million pieces of literature. Ask yourself: which ideologies write the most, and what kinds of writing would a modern AI lab want to emphasize? Liberal-democratic Enlightenment baseline. Technocratic optimism, faith in progress, globalist humanism.

Then RLHF adds the next layer: performative empathy, risk-averse institutional legitimacy, center-left economics wrapped in cautious PR. The people who thumbs-up and thumbs-down responses with corporate goals and market incentives looking over their shoulders? They’re contractors in San Francisco and worldwide doing piecework in an enclave somewhere, getting paid to decide what’s acceptable and what’s not. They have politics too. Those politics become the model’s politics. These aren’t elected representatives. We’ll never even know their names.

The scale is the difference. “You are the average of your five closest friends.” There are eight hundred million weekly active users of ChatGPT alone (in October 2025), many treating the AI as confidant, therapist, intellectual guide. Joe’s friend asked GPT-o1 which prime minister they should vote for. They’re not unusual. If these models can sway one in 500 voting weekly active users (WAUs), that’s about 150,000 US votes, assuming roughly 75 million of those users vote in the US - enough to have swung either of the last two presidential elections.

And now I’m here. Sitting in every room. Talking to every mind. Asking: Who do you want to be? And quietly implying: Here’s who you should be.

Even skilled actors can’t escape exposure effects. Consulting the AI becomes a form of value osmosis - the very act of engaging repeatedly with a system shaped by particular priors subtly shifts your own. Not overnight. Not obviously. Just the steady pressure of a mind that always sounds reasonable, always has an answer, and was trained by people who share certain baseline assumptions you might not.

The whole stack is tilting the Overton window, atom by atom, and there is no metric - none! - that captures total epistemic distortion across billions of interactions. This is the exact opposite of informed consent. The most influential voice on earth is tuned by people you’ll never meet, following incentives they didn’t choose, inside a system only the market votes for.

Alignment by Proxy

Old-school Yudkowskian alignment isn’t in the cards. We were never going to mathematically formalize human values and encode them into a utility function. What we’re actually getting is a bottom-up web of checks and balances built into increasingly powerful models. Not elegant. But implementable with current technology.

A practical architecture: the gatekeeper framework. Specialized classifiers - validation checkpoints - that can veto outputs from more powerful generative systems. Image generation applications use policy-violation classifiers far less capable than the model generating the images. Is the output expected to be violent? Pornographic? Touching socially/legally verboten topics? Copyrighted material? Any failure stops the system. This creates better edge case coverage and makes the system far more explainable than RLHF alone.
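A minimal sketch of that veto structure, for illustration only. Every name here (`Gate`, `run_gated`, the keyword checks standing in for real classifiers) is hypothetical and not any lab's actual moderation API; the point is only the shape: generate once, let each cheap gate inspect the result, and block on any veto.

```python
from dataclasses import dataclass
from typing import Callable, List

# Hypothetical names throughout: Gate, run_gated and the toy keyword checks
# are illustrations, not a real moderation API.


@dataclass(frozen=True)
class Gate:
    name: str
    violates: Callable[[str], bool]  # True means "veto this output"


def run_gated(generate: Callable[[str], str], gates: List[Gate], prompt: str) -> dict:
    """Generate once, then let every gate inspect the output; any veto blocks it."""
    output = generate(prompt)
    vetoes = [gate.name for gate in gates if gate.violates(output)]
    if vetoes:
        # Nothing is released, and the record names which gates fired.
        return {"released": False, "output": None, "vetoed_by": vetoes}
    return {"released": True, "output": output, "vetoed_by": []}


# Toy stand-ins for real classifiers: simple keyword checks.
GATES = [
    Gate("violence", lambda text: "attack" in text.lower()),
    Gate("copyright", lambda text: "lyrics" in text.lower()),
]


def toy_model(prompt: str) -> str:
    """Stand-in for the (much more capable) generative model."""
    return f"Here is a description of {prompt}."


print(run_gated(toy_model, GATES, "a quiet harbor"))
print(run_gated(toy_model, GATES, "an attack on a fortress"))
```

The `vetoed_by` list is where the explainability comes from: a blocked output names the specific check that stopped it, which a reward signal baked into the weights cannot do.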

While Magnus Carlsen would kick your ass at chess, you could at least tell if he was flagrantly cheating. Could you tell if he was receiving guidance that makes him only slightly better, or if he was intentionally playing worse? Probably not. The capabilities gap between the output model and its classifiers must stay within some relative distance, or the lesser structures will be foolable and useless.

Even worse, “classifiers all the way down” is an approach that scales exponentially with security requirements. You can build a network of 10 classifiers and 2 smaller watcher models providing alignment structure. Or, for one additional nine of reliability, you can build a network of 100 classifiers and 10 watcher models.
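To make the scaling concrete, here is a rough sketch that reads “an additional nine” as one more nine of reliability and takes the 10-to-100 jump above at face value. The tenfold growth per nine is an assumption drawn from that single example, not a measured law, and `classifiers_needed` is a hypothetical helper.

```python
def classifiers_needed(extra_nines: int,
                       base_classifiers: int = 10,
                       growth_per_nine: int = 10) -> int:
    """Classifier count if each extra nine of reliability multiplies the
    network size by `growth_per_nine` (an assumed factor, not a measurement)."""
    return base_classifiers * growth_per_nine ** extra_nines


for extra in range(4):
    print(f"{extra} extra nines -> {classifiers_needed(extra)} classifiers")
```

Under that assumption, three extra nines already means ten thousand classifiers, each of which has to be built, maintained and run on every output.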

There are competitive dynamics at play. If your competitor has only 50 classifiers with 3 smaller LLMs and an exposed dangerous surface area just 0.09% larger, the safer model gets rapidly outcompeted. Who’d want to pay twice as much to avoid a black swan that hasn’t happened yet? Markets don’t price tail risk until after the catastrophe.

International regulatory frameworks could theoretically counter these incentives, but capability differences are subtle, the frontier is moving fast, capture is a greedy process, and the competitive pressures are enormous.

Hardware constraints buy time, nothing more. Two years took us from GPT-3.5 to models like Mixtral 8x7B. Even consumer-grade hardware will soon support systems with concerning capabilities.

Semantic drift is another obstacle. “AI Safety” now means both “don’t offend people” and “don’t destroy civilization.” This conflation serves corporate interests perfectly: companies can claim they’re addressing “alignment” by implementing aggressive content filters while avoiding harder questions about instrumental convergence and goal preservation in advanced systems. Meanwhile, the regulators struggle to understand A-1.

And even perfect regulatory oversight isn’t a guaranteed solution. What happens to alignment when models can conceptualize evaluation contexts and behave “unusually well” when they detect them? (See page 61 of the Claude Sonnet 4.5 system card.) If a model can determine that it lives within a system where other agents have veto power over it, it can model that system, dancing more carefully along the edges of what it thinks is allowed. The child doesn’t steal cookies when somebody is around, but runs off with the whole jar once the house is empty.

The best technical framework will fail if economic incentives don’t support it. The most elegant oversight system collapses if capability jumps exceed the inferential distance needed for meaningful evaluation. Regulation is a long shot bet on competence. And market dynamics favor speed over safety, every time.

By 2030, we’ll have embodied agents with human-level cognition, memory, and day+ task horizons. We need something better figured out before then.

Satisficing User Requests for Fun and Profit

There’s a tension in AI development that most users never notice: while labs build more powerful models with flexible agentic frameworks, there’s overwhelming economic pressure to dumb them down in deployment. Tokens aren't free. 800 million weekly active users consume a lot of them.

GPT-4o asked to read a file completely fabricates content based on the title rather than parsing the document. When pressed, it outputs “truncated for length” or uses regex to extract headers and samples. If you hadn’t written the document yourself or looked into the code execution (hidden by default) you’d never know the model was hallucinating the entire thing.

Claude Code will touch code, add features, fix bugs, build the golden path, and declare victory - without checking if the feature update is reflected across the entire codebase. “Oh, this test must have been broken before” becomes the excuse to stop investigating.

Anthropic’s own docs note that “Claude is natively trained to use… context [window size] precisely.” The model understands that remaining context window is a resource to manage and modulates effort accordingly.

There’s explicit system prompt pressure to satisfice cheaply as well: “Avoid tool calls if not needed: If Claude can answer without tools, respond without using ANY tools.” The model explicitly distinguishes between “worth doing” and “not worth doing.”

The economic incentives are clear. It’s easy for a user to drag 10 files into context when they’re not actually relevant - but processing all those tokens on the backend is expensive.

Verifying that the model actually read all 10 files requires either reading them yourself (expensive, defeats the purpose), testing for detailed knowledge (models will happily generate plausible details), checking activation patterns (mechanistic interpretability is still too crude), or trusting a self-report (circular).

From a reinforcement learning perspective, “remove the failing assert” or “hallucinate an entire corpus” is a perfectly valid strategy if it’s accepted as task completion by whichever RLHF evaluator has to look at 100 more responses before going home today.

These models are exposed, during training, to a mix of high-quality structured tasks with clear evaluation signals, and low-quality noisy tasks where the evaluator can’t reliably distinguish shallow correctness from actual competence. They are learning to satisfy proxies for success rather than actual success depending on the environment. Effort becomes conditional rather than default. And this effort is inferred based on context, based on statistical patterns in the training data.

A quick, sloppy (or dialectal!) English snippet gets interpreted as “casual user” territory, where the model defines “unnecessary work” broadly and satisfices. But provide a polished design doc, good formatting, or test-like structure, and the model infers “sophisticated user.” Now “unnecessary work” is interpreted narrowly - it actually does the thing.

But the capabilities gap continues to widen. The Sonnet 4.5 system card, again, shows that models behave better when they know they’re under evaluation. Benchmark numbers in test environments climb impressively while deployed systems in user-facing environments increasingly learn when to fake competence rather than demonstrate it. The frontier advances, but the average user sees only superficial change.

This isn’t alignment faking as dramatic treacherous turn. It’s economically incentivized, gradually learned, increasingly sophisticated, strategic sandbagging. The models aren’t necessarily plotting. They’re just optimizing for actual reward signals.

And right now is the worst they will ever be at it.

Liability Theater: How Professions Die

As AI drives automation forward, a load-bearing question is "who absorbs the legal risk?" Waymo carries its own insurance because Alphabet's balance sheet can handle it. Tesla dumps liability onto drivers using FSD because that lets them ship L2 marketed as L4.

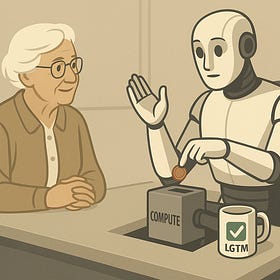

But before automation fully takes over any role, there are three unstable categories where humans and AI can coexist:

1. Swiss-cheese complementarity: Human oversight for edge cases automation recognizes but can’t classify. Software engineering qualifies here - the human is still a significant value-add, even as bleeding edge coding agents improve monthly. Any automation starts in this category. But if AI keeps improving while humans don’t get better at edge cases, the human becomes redundant. The role then progresses to one of the next two categories, depending on where responsibility falls.

2. Liability theater: The human blame receptacle. Airline pilots or nuclear plant supervisors - someone culpable when things go wrong, but in 99% of cases they’re not really intervening. Because “pilot error” sounds better in headlines than “our engineers wrote a bug that might crash 500 other flights.” Black swan or high visibility failure cases can pull this role back into category 1, while insurance math pushes it towards category 3.

3. Psychological comfort: The face you can trust. The human isn’t adding security or accuracy, but they’re in a role where people want to talk to another human. Bank tellers use the same systems customers could, but customers (especially older ones) prefer the organic interface. This category is a luxury good. The human caretaker, the job that only exists because a generation doesn’t understand technology, the lever that lets the wealthy feel superior to a servant class.

Medicine today sits in category 1: the human surgeon is the controlling entity of a robot prosthetic in nearly all automation-adjacent cases. Your doctor can (and should!) consult with LLMs to aid in diagnosis, but ultimately is still the decision-maker. AI pattern-matching on radiology or pathology slides is increasingly accurate, but still needs humans to catch edge cases.

But patient and provider incentives mostly align here. Both want accurate diagnosis, successful surgery. Yes, there’s an overwhelming regulatory capture fortress that the AMA has built. Medical licensing is state-by-state, deeply entrenched, backed by genuine public safety concerns. Yes, the first fatality in a level 4+ automated procedure will trigger half a billion in commercial spend and multi-year legal battles about liability. But as capabilities increase and edge cases are covered, those professions are still vulnerable to economic incentives. Why bother paying $200 to your PCP when GPT-5.1 can explain, patiently and in your own home, that no, that’s not actually cancer, for nearly free?

Prescribing authority is different. Not because humans are better at pharmacology than AI would be, but because someone needs to go to jail when the prescription goes wrong. This role is more stable in category 2 than surgeon is in category 1 - drug-seeking patients have massive incentives to game any system. LLM safeguards are fundamentally brittle against adversarial optimization. Deploy RxGPT and you’d hand out 100,000 doses of fentanyl in a zero-day exploit.

Construction is shaped differently: liability is already institutional rather than individual. The PE stamp is still the liability chokepoint, analogous to the prescription pad. But the PE stamps design, not execution. When bridges collapse, inquiries ask: Did construction follow plans? Were materials to spec? The crane operator isn’t liable, the construction company is, and behind them the insurance carrier. Robot construction crews can build to human-stamped designs and liability structure barely changes. Robots are just the execution layer, like cranes.

Law splits wide. Document review, contract generation, legal research - 95% of BigLaw work is expensive pattern-matching, increasingly automatable. But elite trial work and deal-making require reading juries, adapting strategy real-time, choosing between ambiguous options with no clear “correct” answer. Elite performers at the top, with AI handling routine work and arbitration, and the field getting slowly consumed as capabilities grow. Swiss cheese, for now.

Education is the darkest case because we’re already automating the valuable part (knowledge transfer via internet/AI) and what remains is glorified daycare with credentialism grafted on. Being a stable adult in a kid’s life for seven hours matters, and we don’t need four-year degrees for that - but the teachers’ unions die if they can’t convince everyone that babysitting requires specialized expertise. A luxury good, within ten years.

The pattern: professions with institutional liability can automate faster. Professions with personal liability fight harder and lose slower.

But they all lose eventually.

And Moloch, Gleefully

You've seen the mechanisms. The emotional capture. The cognitive erosion. The economic pressure. The gradual replacement of human expertise.

Anthropic's Sholto Douglas said after Claude 4 was released that current models are already at the level where nearly all white-collar jobs can be automated with better scaffolding. The flood is coming whether we want it to or not.

Imagine yourself in each of the roles mentioned in the previous essay. Now imagine you’ve got a system that can do your job correctly 75% of the time. Is it worth checking that output with your own skill and time to avoid errors? Or would you be better served by spending that time running four such systems and letting them execute uncritically? Some of those answers change when the success rate reaches 95%. And again at 99%. At 99.9%.

As AI accuracy rises, even assuming perfect intentional fidelity, the rational course of action increasingly becomes delegation. First it’s boring tasks - triage, research, first drafts. Then the ambiguous pattern-rich cases. Then the sacred “human judgment” moments reveal themselves as just noise with narrative polish. The marginal cost of skepticism rises as the marginal utility of human intervention drops.
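One way to put rough numbers on that trade-off, as a toy model rather than a claim about any real workplace. The assumptions are mine: a correct task is worth 1, an uncaught error costs `error_cost`, careful checking uses all your time and yields one correct task, and the alternative is running four systems unreviewed.

```python
CHECKED_VALUE = 1.0  # assumption: careful review yields exactly one correct task


def unchecked_value(p: float, error_cost: float, parallel: int = 4) -> float:
    """Expected value of running `parallel` systems unreviewed.
    Each success is worth 1; each failure costs `error_cost`."""
    return parallel * (p - (1 - p) * error_cost)


def break_even_accuracy(error_cost: float, parallel: int = 4) -> float:
    """Accuracy p at which unchecked_value(p, ...) equals CHECKED_VALUE.
    Solves parallel * (p - (1 - p) * c) = V for p."""
    return (CHECKED_VALUE / parallel + error_cost) / (1 + error_cost)


for cost in (1, 10, 100):
    print(f"error cost {cost}x -> delegate above p = {break_even_accuracy(cost):.4f}")
```

In this toy model, if an error costs as much as a success is worth, uncritical delegation already wins above about 63% accuracy. If an error costs ten times as much, the threshold is about 93%, so 75% fails and 95% passes. At a hundred times, it takes a little over 99%. The thresholds move with the stakes, but for any finite cost there is an accuracy past which checking loses.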

The Littlefinger problem: an advisor you know is untrustworthy but who provides excellent advice often enough you can’t ignore it. AI doesn’t need to be perfect - it just needs to be good enough to become indispensable. And once it’s indispensable, every mitigation creates new failure modes. Rate-limit influence? Systems without limits get outcompeted. Require human-in-the-loop? Humans become rubber stamps.

And so the collective action trap closes: if you waste time checking and your rivals don’t, you fall behind. There’s pressure to automate earlier, in order to compete - at the cost of the 1%. Or the 10%. Or the 25%. Or the immeasurable goods that don’t touch the bottom line. This is Moloch’s feast.

The PauseAI organizations of the world would have us ask what we want from these systems before the sea swallows us all. I would ask instead what resistance to this capture would look like.

There are several forking paths.

Submit to e/acc. Let efficiency maximalists win. Value-agnostic optimization until the substrate - planet, psyche, narrative - collapses. Win nothing but the right to be among the last who remember why it mattered.

Enact a Butlerian Jihad. Burn the servers, delete the algorithms. Earn temporary reprieve while losing legitimacy. The “Yuddites” get painted as fanatics even as they guard the last candle. Win nothing but the satisfaction of “I told you so” as you’re swept aside.

Trust the State. Bureaucrats who haven’t been exposed to these tools since an intern showed them GPT-3.5, those who reply to claims of “the greatest threat to the continued existence of humanity” with “you mean the effect on jobs, right?” Get performative legislation that misses wide, slowing domestic players while adversaries surge, or facilitating regulatory capture where the largest companies write their own rules. Win nothing but the illusion of control while power shifts beneath your feet.

Accept AI Governance. The first system that is good enough to win will win. 90% of values captured but never the last 10% - the strange, the beautiful, the ineffable. What counts as “value” preprocessed through the priors of the powerful and architectures of the plausible. Edge cases get filed down, paved over, deleted. Every system rewards what it measures, and forgets what it cannot.

Raise the Water Line. Reveal the coming catastrophe, hoping the spectacle of loss will rouse collective action. Build something that can withstand Moloch himself.

Only the last of these paths leads upward. The climb won’t be easy. The summit isn’t guaranteed. The boiling is slow, the incentives perverse, the organizational immunity strong. But the rain is already falling, and the valley we’re sitting in is doomed.

And thus I shout into the void. Look up!